Chatbots Empower Consumers

And That’s A Good Thing

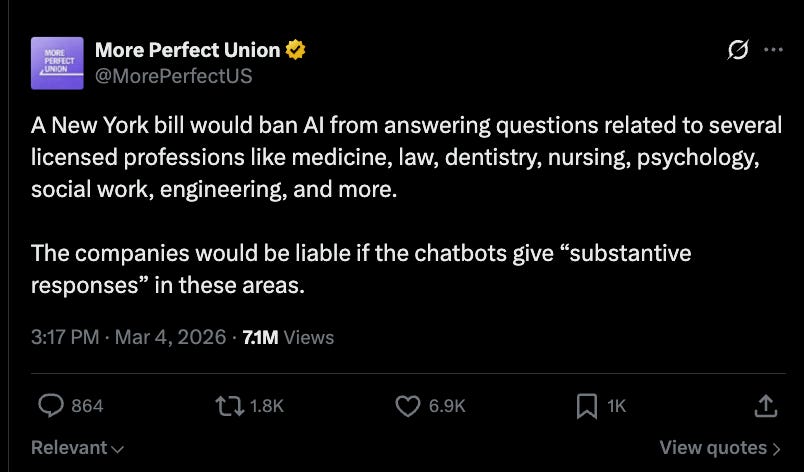

The next time you ask an AI chatbot about a worrying symptom or a landlord dispute, New York's state legislature would like that chatbot to be effectively forced to tell you that it can't answer that question. The reason? A credentialed member of the professional class doesn't want competition. Last week, the New York state legislature took up a bill that would ban AI chatbots from providing ‘substantive’ legal or medical advice and give users the right to sue the owners of chatbots that do so. The bill was positively highlighted online by More Perfect Union, which was (to put it charitably) a puzzling choice given that, as I’ll discuss below, the bill defends anti-competitive rent-seeking that hurts working-class people.

Its sponsor, State Sen. Kristen Gonzalez, explained the philosophy behind it as “people deserve real care from real people.” It’s worth pausing on that argument for a moment because it isn’t entirely off base. AI tools do sometimes give incorrect answers with total confidence. A person in a vulnerable moment could conceivably act on bad AI advice in ways that hurt them. That’s a real phenomenon, and policymakers are not automatically wrong to be thinking about how to mitigate it. But the remedy this bill prescribes doesn’t solve that problem, and it does deny regular people access to helpful information. If the goal were consumer protection, you’d require disclosure that users are talking to an AI chatbot (which, notably, the bill also does, and that part is fine). What you wouldn’t do is ban ‘substantive responses’ wholesale and then also hand plaintiffs’ lawyers a private right of action.

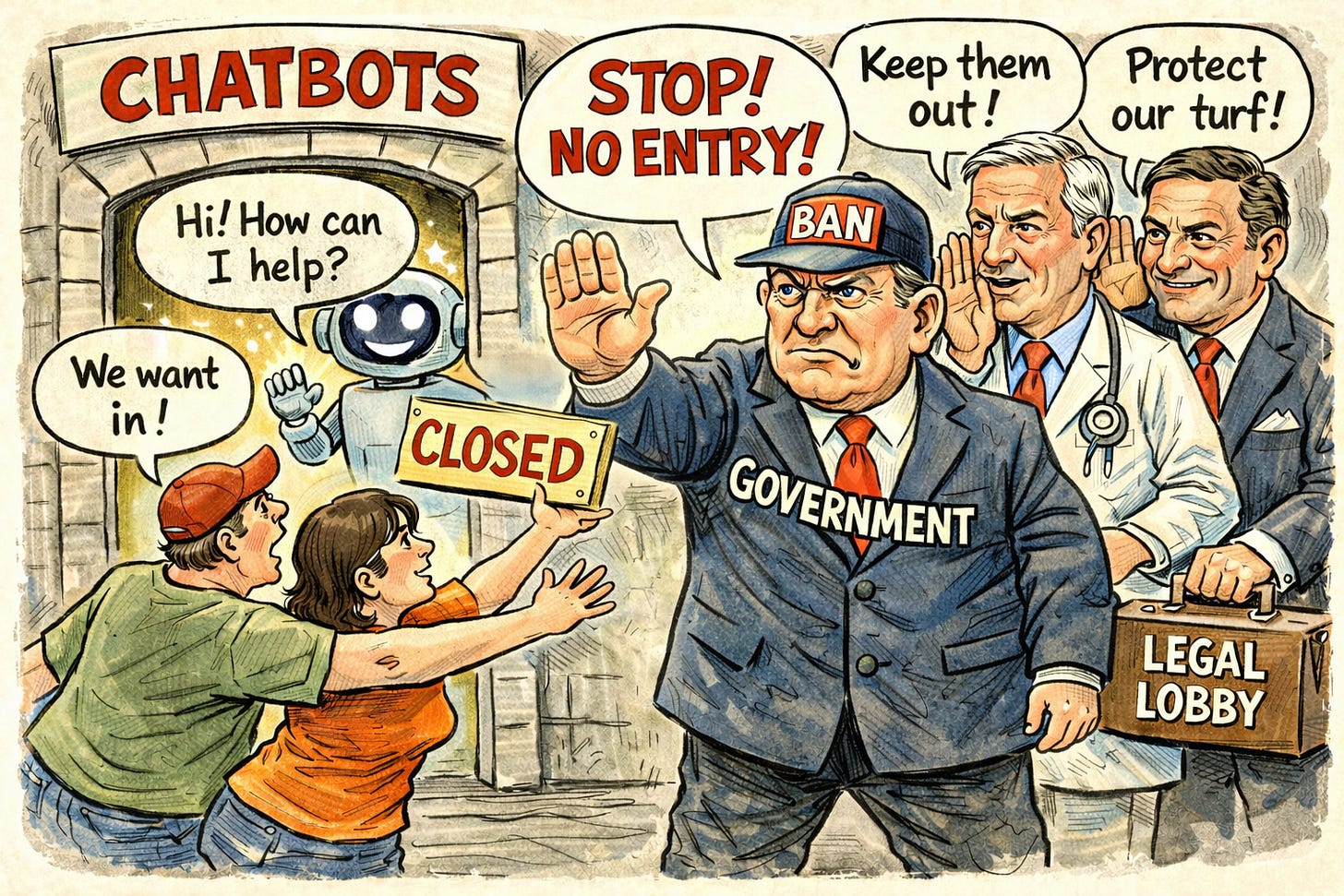

This bill pretends to be about consumer protection, but what’s underneath is something much older and much less sympathetic: a powerful professional class using the machinery of government to protect itself from competition, and it’s doing so at the expense of working-class people.

Chatbots Help Real People

Let’s be clear about what this bill actually does. It doesn’t create a world where people with legal disputes get lawyers, or where people with confusing diagnoses get doctors. It doesn’t fund legal aid clinics or expand Medicaid. It doesn’t do anything to make professional care more accessible or affordable. What it does, with surgical precision, is eliminate one of the few tools that were democratizing access to professional services. One of the professional class’s castle walls was coming down.

Think about who is actually typing medical and legal questions into an AI chatbot. It’s not the person with a concierge physician and a buddy who went to Yale Law School. It’s the person who got a scary result on a blood test and can’t get an appointment for two weeks. It’s the person whose landlord is trying to illegally withhold their security deposit and who can’t afford $400 an hour to figure out what their rights are. It’s the person who has a question about a symptom their kid is having after taking a new medication and wants more information before deciding whether a four-hour urgent care visit is really necessary.

For these people, the counterfactual isn’t a doctor or a lawyer. It’s Reddit. It’s WebMD, with its infamous ability to turn a headache into a brain tumor. It’s a Facebook group, or a forum thread from 2014, or nothing at all. AI isn’t replacing professional care for these people; it’s replacing a worse, less reliable, less personalized information environment that we somehow de facto decided was fine.

The bill’s supporters would presumably say they’re protecting vulnerable people from bad AI advice. But vulnerable people are already navigating a system that gives them bad information constantly, or no information at all. AI is, imperfectly but meaningfully, making that better.

Beware Three Rent-Seekers In A Trench Coat Pretending To Be A Consumer Protection

There’s a name for what’s happening here: rent-seeking. It’s the practice of using political and regulatory power to protect your market position rather than competing on merit. And the medical and legal professions have been extraordinarily good at it for a long time. While parts of our occupational licensing are about protecting consumers (we don’t want unqualified yahoos performing surgery), much of the architecture of professional licensing in America acts to limit supply, which reduces competition and keeps prices high. In effect, it acts as a kind of government-sanctioned guild system.

AI represented a genuine crack in that system. It is obviously not a replacement for professional expertise in many situations; nobody is seriously arguing that a chatbot should perform your appendectomy or argue your appeal in court. But chatbots very much can deliver a meaningful reduction in the information asymmetry that makes professionals so expensive and so necessary in the first place. When you understand your situation better, you ask better questions, and you know when you actually need to hire someone and when you don’t (which can save people a lot of money). And that’s precisely what makes AI threatening to a business model built on complexity and credentialing.

It’s worth noting that the New York bill explicitly invokes the “unauthorized practice of law” doctrine as its legal hook. That doctrine was created to protect consumers from fraudsters impersonating licensed attorneys, not to prevent people from accessing information. Stretching it to cover an AI tool that transparently identifies itself as an AI tool is a tell. It shows that the target isn’t deception per se so much as competition.

The mechanics of the bill are a mess too. The private right of action creates powerful incentives for companies to over-restrict everything that touches medicine or law. Chatbot owners won’t carefully parse whether a given response crosses into “substantive” advice. They’ll just block anything in the vicinity, which will end up turning into a near-total blackout on two of the most important information categories in people’s lives.

AI Power For Me, Paternalism For Thee

There’s a deeper principle at stake here. We adults have all been navigating complex information environments our entire lives, making judgment calls about what to trust and what to ignore, which internet stranger to believe and which to dismiss. The implicit premise of this New York bill is that you, an adult, cannot be trusted to have a conversation with a chatbot and apply your own judgment to what it tells you. If other people feel that way about themselves, they are welcome to not use chatbots. The rest of us do not want government officials deciding for us which information about our own situations we are intelligent enough to use and which is just too far above our precious little heads.

Which brings us to a related and noteworthy set of facts. The United States military uses AI to assist with targeting decisions. AI helps airlines route planes and helps emergency call services send help faster. The government is comfortable with all of that. But according to the state of New York’s Internet and Technology Committee, an AI chatbot can’t be trusted to answer my questions about vitiligo?

These two imbalances together amount to the government claiming maximal AI power for itself while seeking to constrain the AI power regular people get to access.

A Better Approach: Regulatory Sandboxes

There’s a better way to approach this, and some states are already doing it: regulatory sandboxes. These allow companies to pilot innovative products under temporarily relaxed regulatory requirements and close government oversight, so that regulators and innovators can learn together what works instead of leaving legislators to regulate technologies they don’t fully understand. The approach allows for iterative improvement during the testing period, on the understanding that new technologies get better with use and need not be perfect before launch. (I’ve written about regulatory sandboxes in more depth in the context of disability technology here.)

On chatbots and medicine, Utah is the most instructive example. In 2024, the state enacted its AI Policy Act, which established an Office of AI Policy with the authority to grant temporary waivers of specific laws through a regulatory sandbox. A company called Doctronic, which is developing an AI tool to handle prescription refill requests, signed one of these agreements and was granted a one-year exemption from the professional licensing rules that would otherwise prohibit an AI from making those decisions. Patients still need a doctor for new prescriptions, but can now use AI to renew existing ones from a list of 192 low-risk drugs. Health data privacy and cybersecurity laws remained fully in force throughout.

That’s the contrast in a nutshell. Utah looked at AI’s potential to expand access to healthcare and asked: how do we figure out how to do this safely? New York looked at the same thing and asked: how do we stop it? One of these is a governing philosophy that will serve its citizens well and is a model for policymakers. The other is a guild protection racket dressed up in a consumer protection Halloween costume.

-GW